In Java, two Out of Memory errors are especially common:

- java.lang.OutOfMemoryError: Java heap space: the application tried to allocate more data in the heap than there is room for. Even if the machine has plenty of free memory, you have hit the maximum heap size allowed by your JVM (-Xmx).

- java.lang.OutOfMemoryError: unable to create new native thread: the JVM asked the OS for a new thread, but the OS could not allocate one.

What is the solution?

To fix this issue, we recommend checking the following items:

- Firstly, check the number of Threads running in your application

- Then, check system-wide Thread settings

- Next, check the number of Processes per user

- Inspect the PID max limit

- Consider reducing the Thread Stack size

Check how many Threads are running in your application

First, estimate how many threads are currently running in your application. The simplest way is to take a thread dump with jstack, passing the PID of the Java process:

jstack -l JAVA_PID > dump.txt

Then group the threads by similar stack traces or exceptions. A large group of threads sharing the same stack trace can point to a leak in one part of your application. Learn more about Thread dumps here: How to inspect a Thread Dump like a pro
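When attaching jstack is inconvenient, a similar grouping can be approximated from inside the application. The following is only a sketch: the class name ThreadCensus and its methods are hypothetical, and it only sees the Java threads of the JVM it runs in, so treat it as a complement to a real thread dump rather than a replacement.

```java
import java.util.EnumMap;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper, not part of any library: groups the live threads of the current JVM.
class ThreadCensus {

    /** Counts live threads of the current JVM, grouped by thread state. */
    static Map<Thread.State, Integer> countByState() {
        Map<Thread.State, Integer> counts = new EnumMap<>(Thread.State.class);
        for (Thread t : Thread.getAllStackTraces().keySet()) {
            counts.merge(t.getState(), 1, Integer::sum);
        }
        return counts;
    }

    /** Counts live threads grouped by their topmost stack frame. */
    static Map<String, Integer> countByTopFrame() {
        Map<String, Integer> counts = new TreeMap<>();
        for (StackTraceElement[] stack : Thread.getAllStackTraces().values()) {
            // Some threads report no Java frames at all.
            String key = stack.length > 0 ? stack[0].toString() : "<no Java frames>";
            counts.merge(key, 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        System.out.println("Threads by state: " + countByState());
        countByTopFrame().forEach((frame, n) -> System.out.println(n + "  " + frame));
    }
}
```

A top frame shared by hundreds of threads is usually the first place to look for a thread leak.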

Check system-wide Threads settings

If you have found no evident leak in your application, the next step is to check the system-wide thread settings.

On a Linux machine, read the /proc/sys/kernel/threads-max file, which holds the system-wide limit on the number of threads:

$ cat /proc/sys/kernel/threads-max

511415

The root user can change that value if they wish to:

$ echo 600000 > /proc/sys/kernel/threads-max

You can check the current number of threads through the /proc/loadavg file:

$ cat /proc/loadavg

0.41 0.45 0.57 3/749 28174

Watch the fourth field! This field comprises two numbers separated by a slash (/):

The first of these is the number of currently executing kernel scheduling entities (processes, threads); this will be less than or equal to the number of CPUs.

The second one is the number of kernel scheduling entities (processes and threads) that currently exist on the system. In this case, there are 749.
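To watch this value over time instead of reading it by hand, the fourth field can be parsed programmatically. The following is a minimal sketch under stated assumptions: the class name LoadAvgThreads and the method parseEntities are hypothetical, and main only works on Linux, where the two /proc files exist.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;

// Hypothetical helper, not part of any library.
class LoadAvgThreads {

    /** Parses the fourth field ("running/total") of a /proc/loadavg line into {running, total}. */
    static int[] parseEntities(String loadavgLine) {
        String[] fields = loadavgLine.trim().split("\\s+");
        String[] parts = fields[3].split("/");
        return new int[] { Integer.parseInt(parts[0]), Integer.parseInt(parts[1]) };
    }

    public static void main(String[] args) throws IOException {
        // Linux only: compare the live total with the system-wide limit.
        String loadavg = Files.readAllLines(Paths.get("/proc/loadavg")).get(0);
        long max = Long.parseLong(
                Files.readAllLines(Paths.get("/proc/sys/kernel/threads-max")).get(0).trim());
        int[] entities = parseEntities(loadavg);
        System.out.printf("%d of %d scheduling entities in use (%.1f%%)%n",
                entities[1], max, 100.0 * entities[1] / max);
    }
}
```

For the sample line shown above, parseEntities returns 3 running entities out of 749 in total.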

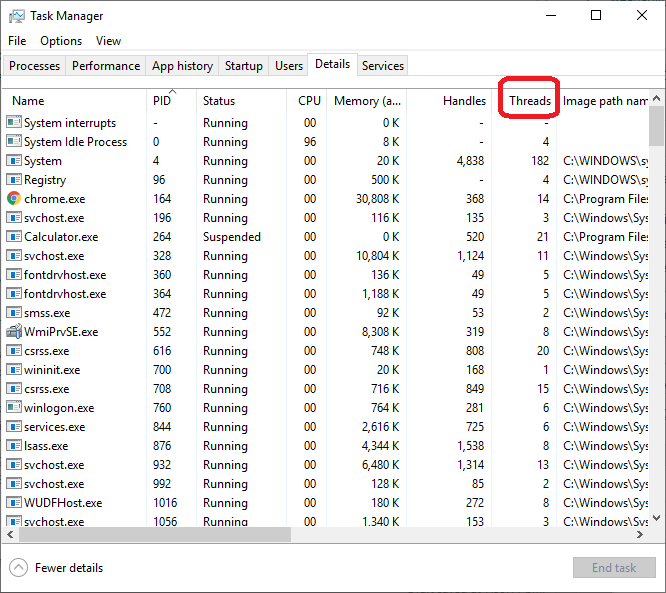

On a Windows machine, you can easily check the number of Threads from the Task Manager Threads column:

Check the number of processes per user

On a Linux box, threads are essentially processes with a shared address space. Therefore, you have to check whether your OS allows enough processes per user. You can check this with:

$ ulimit -a

core file size          (blocks, -c) unlimited
data seg size           (kbytes, -d) unlimited
scheduling priority             (-e) 0
file size               (blocks, -f) unlimited
pending signals                 (-i) 125829
max locked memory       (kbytes, -l) 16384
max memory size         (kbytes, -m) unlimited
open files                      (-n) 1024
pipe size            (512 bytes, -p) 8
POSIX message queues     (bytes, -q) 819200
real-time priority              (-r) 0
stack size              (kbytes, -s) 8192
cpu time               (seconds, -t) unlimited
max user processes              (-u) 125829
virtual memory          (kbytes, -v) unlimited
file locks                      (-x) unlimited

The relevant entry is max user processes (-u), which is 125829 on this machine; on some distributions the default is as low as 1024. Next, count the number of running processes:

$ ps -elf | wc -l

220

This number, however, does not consider the threads which can be spawned by a process. If you try to run ps with a -T you will see all of the threads as well:

$ ps -elfT | wc -l

385

As you can see, the count increases significantly once threads are included. Next, check how many threads are spawned by your WildFly / JBoss process. You can do it in at least two ways:

$ ps -p $JBOSSPID -lfT | wc -l

The above command returns the number of lightweight processes (LWPs) created for the given process ID, plus one line for the ps header. This should roughly match the thread count in the thread dump generated by jstack:

$ jstack -l $JBOSSPID | grep tid | wc -l

Now compare the result of the ps/jstack output with the number of processes for the user.
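On Linux, the same per-process count is also visible as the number of entries under /proc/PID/task, one per thread. The sketch below reads that directory; the class name NativeThreadCount and the method countTasks are hypothetical. Keep in mind that the per-user limit applies to all processes and threads of the user together, not just to this one process.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.stream.Stream;

// Hypothetical helper, not part of any library.
class NativeThreadCount {

    /** Counts the subdirectories of a task directory such as /proc/<pid>/task (one per thread on Linux). */
    static long countTasks(Path taskDir) {
        try (Stream<Path> entries = Files.list(taskDir)) {
            return entries.filter(Files::isDirectory).count();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Linux only. "self" resolves to the calling process; pass a PID to inspect another one.
        String pid = args.length > 0 ? args[0] : "self";
        System.out.println(countTasks(Paths.get("/proc", pid, "task")));
    }
}
```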

To change the limit for the current shell session (for example, to 4096):

$ ulimit -u 4096

Check your PID max

The kernel attribute /proc/sys/kernel/pid_max defines the maximum numerical process identifier that the kernel can assign to a process. You can check this value by executing:

$ sysctl -a | grep kernel.pid_max

kernel.pid_max = 32768

On Linux, every thread also consumes an identifier from this range, so creating a new process or thread can fail once pid_max identifiers are in use at the same time. The default and maximum values depend on your OS architecture.

Reduce the Thread Stack size

Another option, if you cannot modify the OS settings, is to reduce the thread stack size. Each thread gets its own stack, which the JVM allocates from native memory outside the Java heap. The more memory you reserve for the heap (whether or not it is actually used), the less is left for thread stacks. In practice, a bigger heap, which is what people usually mean by "more memory", can result in fewer threads being able to run concurrently.

First of all, check the default thread stack size, which depends on your operating system:

$ java -XX:+PrintFlagsFinal -version | grep ThreadStackSize

intx ThreadStackSize = 1024

As you can see, the default thread stack size is 1024 KB on our machine. To reduce the stack size, add the -Xss option to the JVM options. In JBoss EAP 6 / WildFly the minimum thread stack size is 228 KB. In Standalone mode, you can change it through JAVA_OPTS, as in the following example:

JAVA_OPTS="-Xms128m -Xmx1303m -Xss256k"
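To get a feeling for what a smaller -Xss buys you, it helps to do the arithmetic. The sketch below is a rough estimate only: the class name StackBudget and the thread count of 2000 are hypothetical, and the result is an upper bound on the space reserved for stacks, since the OS typically commits stack pages only when they are touched.

```java
// Hypothetical helper, not part of any library.
class StackBudget {

    /** Space reserved for thread stacks, in MB, for a given thread count and stack size in KB. */
    static long reservedStackMb(long threads, long stackSizeKb) {
        return threads * stackSizeKb / 1024;
    }

    public static void main(String[] args) {
        long threads = 2000; // hypothetical thread count, for illustration only
        System.out.println(reservedStackMb(threads, 1024)); // prints 2000 (default 1024 KB stacks)
        System.out.println(reservedStackMb(threads, 256));  // prints 500 (with -Xss256k)
    }
}
```

With these assumed numbers, moving from 1024 KB to 256 KB stacks cuts the reservation to a quarter, which leaves room for roughly four times as many threads in the same budget.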

In Domain Mode, you can configure the jvm element at various levels (Host, Server Group, Server). There you can set the requested stack size as in the following section:

<jvm name="default">
    <heap size="64m" max-size="256m"/>
    <jvm-options>
        <option value="-server"/>
        <option value="-Xss256k"/>
    </jvm-options>
</jvm>
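The -Xss option applies to every thread the JVM creates. For threads your own code creates, a stack size can also be requested per thread, using the four-argument constructor of java.lang.Thread. This is a different technique from the -Xss setting above, and it is shown only as a sketch: the class name SmallStackThread and the thread name are hypothetical, and the stack size is just a hint that the JVM may round or ignore on some platforms.

```java
// Hypothetical helper, not part of any library.
class SmallStackThread {

    /** Starts a thread with a requested stack size in bytes; the JVM treats the value as a hint. */
    static Thread startWithStack(Runnable task, long stackSizeBytes) {
        // A null ThreadGroup means the new thread joins the group of the creating thread.
        Thread t = new Thread(null, task, "small-stack-worker", stackSizeBytes);
        t.start();
        return t;
    }

    public static void main(String[] args) throws InterruptedException {
        Thread t = startWithStack(
                () -> System.out.println("running in " + Thread.currentThread().getName()),
                256 * 1024);
        t.join();
    }
}
```

This only helps for threads you construct yourself; threads created by the application server or by libraries still use the JVM-wide default.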

Conclusion

In summary, the java.lang.OutOfMemoryError: unable to create new native thread error occurs when the OS cannot give the JVM another thread, typically because a system limit or the available memory has been exhausted. By checking for thread leaks, reducing the number of threads, raising the relevant OS limits (threads-max, ulimit -u, pid_max) and reducing the thread stack size, you can resolve this issue and keep your Java application running smoothly.
