This article compares OpenShift with the Kubernetes container management project, covering both management and development areas.

First of all, some definitions.

- Red Hat OpenShift is an Enterprise Open Source Container Orchestration platform. It’s a software product that includes components of the Kubernetes container management project but adds productivity and security features which are important to large-scale companies.

So, in a nutshell, OpenShift Container Platform focuses on the enterprise user experience. It is designed to provide everything a full-scale company may need to orchestrate containers, adding enhanced security options and professional support, and to integrate directly into enterprises’ IT stacks.

- Kubernetes, on the other hand, is a portable, extensible, open-source platform for managing containerized workloads and services that facilitates both declarative configuration and automation. Kubernetes has a large and rapidly growing ecosystem, and Kubernetes services, support, and tools are widely available.

It is worth mentioning that Kubernetes sits at the heart of OpenShift, so in terms of API, what you have in Kubernetes is also fully included in OpenShift. That being said, let’s compare OpenShift with Kubernetes.

Product vs Project

- Kubernetes is an open source project, while OpenShift is a product, supported by Red Hat, which comes in several variants.

Broadly speaking, Red Hat OpenShift is available as a hosted service (in several variants, such as OpenShift Dedicated, OpenShift clusters hosted on Microsoft Azure, and OpenShift on IBM’s public cloud) and as a self-managed service (on your own infrastructure).

- OpenShift derives from OKD, the Community Distribution of Kubernetes that powers Red Hat OpenShift. OKD is free to use and includes most of the features of the commercial product, but you cannot buy support for it, nor can you use the official Red Hat-based images.

Installation

Red Hat OpenShift Container Platform is fully supported on Red Hat Enterprise Linux as well as on Red Hat Enterprise Linux CoreOS. To be precise, on OCP 4.13 the supported operating systems are:

- Red Hat Enterprise Linux (RHEL) 8.6, 8.7, and 8.8

- Red Hat Enterprise Linux CoreOS (RHCOS) 4.13

Kubernetes, being an open source project, can be installed on almost any Linux distribution, such as Debian, Ubuntu, and many others.

It is worth noting that a Linux distro called k3OS has been built for the sole purpose of running Kubernetes clusters. In fact, it is a Linux distro and the k3s Kubernetes distro in one. As soon as you boot up a k3OS node, you have Kubernetes up and running. When you boot up multiple k3OS nodes, they form a Kubernetes cluster. k3OS is perhaps the easiest way to stand up Kubernetes clusters on any server.

Image Management

- OpenShift uses Image Streams to provide a stable pointer to an image using various identifying qualities. This means that even if the source image changes, the Image Stream will still point to the right version of the image, ensuring that your application will not break unexpectedly.

- Kubernetes, on the other hand, has no resource responsible for building container images. You have a couple of choices when you want to build container images for Kubernetes: external tools, scripts, or Kubernetes’ internal resources. In almost all cases, you will end up using the plain old docker build command.
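To make the "stable pointer" idea behind Image Streams more concrete, here is a toy model in Python. It is only a sketch and not the OpenShift implementation: the `ImageStream` class, the dictionary standing in for a registry, and the digests are all hypothetical. It assumes that a stream tag records the digest it was last imported as and keeps pointing at it until a new import happens.

```python
class ImageStream:
    """Toy model (hypothetical): each tag remembers the digests it was imported as."""

    def __init__(self, name):
        self.name = name
        self._history = {}  # stream tag -> list of digests, newest last

    def import_tag(self, tag, registry, source):
        """Record the digest that `source` currently resolves to in the registry."""
        digest = registry[source]
        self._history.setdefault(tag, []).append(digest)
        return digest

    def resolve(self, tag):
        """Return the digest the stream tag points to right now."""
        return self._history[tag][-1]


# A dict stands in for an external registry; the digests are made up.
registry = {"nginx:1.25": "sha256:aaa"}

stream = ImageStream("web")
stream.import_tag("stable", registry, "nginx:1.25")

# The upstream tag is re-pushed with different content...
registry["nginx:1.25"] = "sha256:bbb"

# ...but the stream still points at the digest it imported earlier.
print(stream.resolve("stable"))  # sha256:aaa
```

In this model, workloads that reference `stable` only see the new image after an explicit `import_tag` call, which mirrors the article's point that an upstream change does not break the application unexpectedly.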

Local Execution

If you want to develop or test against your container orchestration platform locally, some tooling is required.

- OpenShift users can use Red Hat OpenShift Local (formerly Red Hat CodeReady Containers), which brings a minimal, preconfigured OpenShift 4.1 or newer cluster to your local laptop or desktop computer for development and testing purposes. It is delivered as a Red Hat Enterprise Linux virtual machine that supports native hypervisors for Linux, macOS, and Windows 10. For OpenShift 3.x clusters, the recommended solution was Minishift.

- Kubernetes users can use Minikube, a tool that makes it easy to run Kubernetes locally. Minikube runs a single-node Kubernetes cluster inside a Virtual Machine (VM) on your laptop for users looking to try out Kubernetes or develop with it day-to-day.

Command Line

- Kubernetes provides a command-line interface named kubectl which is used to run commands against any Kubernetes cluster.

Since OpenShift Container Platform runs on top of a Kubernetes cluster, a copy of kubectl is also included with “oc”, OpenShift Container Platform’s default command-line interface (CLI).

- OpenShift uses the “oc” binary, which offers the same capabilities as the kubectl binary but is further extended to natively support OpenShift Container Platform features, such as full support for OpenShift resources, authentication, and additional developer-oriented commands. One example is oc new-app, which creates all the required objects with a single command and lets you decide whether to export them, change them, or store them somewhere in your repository.

Security

Since OpenShift is a supported product, it enforces stricter security policies. For example, by default OpenShift does not allow containers to run as root, so many images available on Docker Hub won’t run out of the box.

In terms of authentication, requests to the OpenShift Container Platform API are authenticated using OAuth access tokens or X.509 client certificates.

There are three types of OpenShift users:

- Regular users, which are created automatically in the system upon the first login or can be created via the API.

- System users, which are created when the infrastructure is defined, mainly to enable the infrastructure to interact with the API securely.

- Service accounts, which are special system users associated with projects.

The OpenShift Container Platform master also includes a built-in OAuth server. Users obtain OAuth access tokens to authenticate themselves to the API.

OpenShift 4.3 also delivers FIPS (Federal Information Processing Standard) compliant encryption and additional security enhancements to enterprises across industries. Combined, these new and extended features can help protect sensitive customer data with stronger encryption controls and improve the oversight of access control across applications and the platform itself.

It is worth mentioning that, in terms of authentication to external apps, OpenShift can handle authentication to multiple applications (Jenkins, EFK, Prometheus) with a single account, using OAuth reverse proxies running as sidecars. Such a proxy performs zero-configuration OAuth when run as a pod in OpenShift and can run simple authorization checks against the OpenShift and Kubernetes RBAC policy engine to grant access.

On the other hand, Kubernetes features a more basic security approach.

All Kubernetes clusters have two categories of users: Service accounts managed by Kubernetes, and normal users. Service accounts are tied to a set of credentials stored as Secrets, which are mounted into pods allowing in-cluster processes to talk to the Kubernetes API. In contrast, any user that presents a valid certificate signed by the cluster’s certificate authority (CA) is considered authenticated.

Kubernetes uses client certificates, bearer tokens, or an authenticating proxy to authenticate API requests through authentication plugins. Older releases also supported HTTP basic auth, but it was removed in Kubernetes 1.19.

Finally, both Kubernetes and OpenShift use role-based access control (RBAC) objects to determine whether a user is allowed to perform a given action within a project (a namespace, in Kubernetes terms).
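The RBAC decision described above can be illustrated with a small Python sketch. It is a simplified model and not the real authorizer: it assumes only namespaced Roles and RoleBindings, ignores API groups, ClusterRoles, groups, and service accounts, and all class and function names (`PolicyRule`, `Role`, `RoleBinding`, `is_allowed`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRule:
    """One simplified rule: which verbs are allowed on which resources."""
    resources: frozenset
    verbs: frozenset


@dataclass
class Role:
    namespace: str
    name: str
    rules: list


@dataclass
class RoleBinding:
    """Grants the named Role to a set of users inside one namespace."""
    namespace: str
    role_name: str
    subjects: set


def _matches(allowed, value):
    # "*" is treated as a wildcard, as in RBAC rules.
    return "*" in allowed or value in allowed


def is_allowed(user, verb, resource, namespace, roles, bindings):
    """Return True if some binding in the namespace grants the action."""
    for binding in bindings:
        if binding.namespace != namespace or user not in binding.subjects:
            continue
        for role in roles:
            if role.namespace != namespace or role.name != binding.role_name:
                continue
            for rule in role.rules:
                if _matches(rule.resources, resource) and _matches(rule.verbs, verb):
                    return True
    return False  # nothing granted the action, so it is denied


roles = [
    Role("shop", "pod-reader",
         [PolicyRule(frozenset({"pods"}), frozenset({"get", "list", "watch"}))]),
]
bindings = [RoleBinding("shop", "pod-reader", {"alice"})]

print(is_allowed("alice", "get", "pods", "shop", roles, bindings))     # True
print(is_allowed("alice", "delete", "pods", "shop", roles, bindings))  # False
print(is_allowed("bob", "get", "pods", "shop", roles, bindings))       # False
```

The point of the sketch is that permissions are purely additive: a request is allowed only when a binding links the user to a role whose rules cover the verb and the resource.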

Networking

- Kubernetes abstractly ensures that Pods are able to network with each other, and allocates each Pod an IP address from an internal network. This ensures all containers within the Pod behave as if they were on the same host. Giving each Pod its own IP address means that Pods can be treated like physical hosts or virtual machines in terms of port allocation, networking, naming, service discovery, load balancing, application configuration, and migration.

- OpenShift, on the other hand, offers its own native networking solution. In more detail, OpenShift uses a software-defined networking (SDN) approach to provide a unified cluster network that enables communication between Pods across the OpenShift Container Platform cluster. This Pod network is established and maintained by the OpenShift SDN, which configures an overlay network using Open vSwitch (OVS).

OpenShift SDN provides multiple SDN modes for configuring the Pod network:

- The network policy mode (the default) allows project administrators to configure their own isolation policies using NetworkPolicy objects.

- The multitenant mode provides project-level isolation for Pods and Services in the entire cluster.

- The subnet mode provides a flat Pod network where every Pod can communicate with every other Pod and Service. The network policy mode provides the same functionality as the subnet mode.
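As an illustration of the isolation policies mentioned for the network policy mode, the following Python sketch builds a NetworkPolicy manifest that only admits traffic from Pods in the same project. The helper name `allow_same_namespace` and the `shop` namespace are hypothetical; the manifest fields follow the `networking.k8s.io/v1` NetworkPolicy schema, and the output is JSON, which `kubectl apply -f` and `oc apply -f` accept alongside YAML.

```python
import json


def allow_same_namespace(namespace):
    """Build a NetworkPolicy that admits ingress only from Pods in `namespace`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-same-namespace", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every Pod in the namespace.
            "podSelector": {},
            "policyTypes": ["Ingress"],
            # A bare podSelector under "from" matches Pods in the same namespace only.
            "ingress": [{"from": [{"podSelector": {}}]}],
        },
    }


policy = allow_same_namespace("shop")  # "shop" is a made-up project name
print(json.dumps(policy, indent=2))
```

Once a policy like this selects a Pod, ingress traffic that no policy allows is dropped, which is how a project administrator can get multitenant-style isolation while staying in the default mode.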

It is worth mentioning that OpenShift Container Platform has a built-in DNS, so services can be reached by their DNS name as well as by the service IP/port.

Ingress vs Route

Although pods and services have their own IP addresses on Kubernetes, these IP addresses are only reachable within the Kubernetes cluster and are not accessible to outside clients.

To make your pods and services accessible from outside, Kubernetes provides the Ingress object. It signals to the platform that a certain service needs to be reachable from the outside world, and it holds the required configuration, such as an externally reachable URL, SSL settings, and more.

On the OpenShift side, it is possible to use Routes for this purpose. When a Route object is created on OpenShift, it gets picked up by the built-in HAProxy load balancer in order to expose the requested service and make it externally available with the given configuration. It’s worth mentioning that although OpenShift provides this HAProxy-based built-in load-balancer, it has a pluggable architecture that allows admins to replace it with NGINX (and NGINX Plus) or external load-balancers like F5 BIG-IP.

An OpenShift Route has more capabilities, as it can cover additional scenarios such as TLS re-encryption and TLS passthrough for improved security, multiple weighted backends (split traffic), generated pattern-based hostnames, and wildcard domains.
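To compare the two objects side by side, the Python sketch below builds a minimal Ingress and a Route with weighted backends as plain dictionaries. The helper functions, host names, and service names are hypothetical; the fields follow the `networking.k8s.io/v1` Ingress and `route.openshift.io/v1` Route schemas as far as this example needs them, and real manifests usually carry more settings (certificates, annotations, an ingress class).

```python
import json

VALID_TERMINATIONS = {"edge", "passthrough", "reencrypt"}


def make_ingress(name, host, service, port):
    """Minimal Ingress that sends all paths on `host` to one Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {"rules": [{
            "host": host,
            "http": {"paths": [{
                "path": "/",
                "pathType": "Prefix",
                "backend": {"service": {"name": service,
                                        "port": {"number": port}}},
            }]},
        }]},
    }


def make_route(name, host, backends, target_port, termination="edge"):
    """Route that splits traffic across `backends`, a list of (service, weight)."""
    if termination not in VALID_TERMINATIONS:
        raise ValueError(f"unknown TLS termination: {termination}")
    if not backends:
        raise ValueError("at least one backend is required")
    refs = [{"kind": "Service", "name": svc, "weight": weight}
            for svc, weight in backends]
    spec = {
        "host": host,
        "to": refs[0],                      # primary backend
        "port": {"targetPort": target_port},
        "tls": {"termination": termination},
    }
    if len(refs) > 1:
        spec["alternateBackends"] = refs[1:]  # remaining weighted backends
    return {
        "apiVersion": "route.openshift.io/v1",
        "kind": "Route",
        "metadata": {"name": name},
        "spec": spec,
    }


ingress = make_ingress("web", "web.example.com", "web-v1", 8080)
route = make_route("web", "web.example.com",
                   [("web-v1", 90), ("web-v2", 10)], 8080,
                   termination="reencrypt")
print(json.dumps(route, indent=2))
```

Here the Route expresses a 90/10 traffic split and re-encrypt termination directly in its spec, whereas the plain Ingress in this sketch has no equivalent field for either.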

Web console

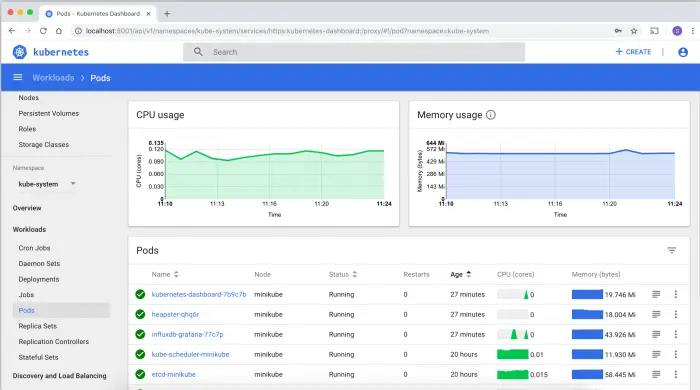

Kubernetes features a Dashboard which is a web-based Kubernetes user interface. You can use it to deploy containerized applications to a Kubernetes cluster, troubleshoot your containerized application, and manage the cluster resources. Overall, the Kubernetes console is mostly focused on Kubernetes resources (Pods, Deployments, Jobs, DaemonSets, etc) and does not add much information compared with the command line.

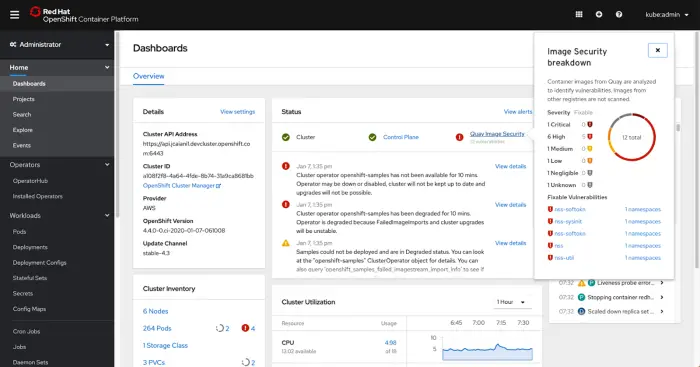

OpenShift, on the other hand, features a graphical Web Console with both Administrator and Developer perspectives. It allows developers to easily deploy applications to their namespaces from different sources (git code, external registries, Dockerfile, etc.) and provides a visual representation of the application components that shows how they interact. Since OpenShift 4 is completely based around the concept of Operators, you can reach the OperatorHub directly from the OpenShift console and install Operators in your project.

Deployments

In Kubernetes, there are Deployment objects while OpenShift uses a DeploymentConfig object. The main difference is that a DeploymentConfig uses ReplicationController while a Deployment uses ReplicaSet.

ReplicaSet and ReplicationController do almost the same thing: both ensure that a specified number of pod replicas are running at any given time. The difference lies in the selectors they use to pick pods: a ReplicaSet supports set-based selectors, while a ReplicationController supports only equality-based selectors. In addition, a DeploymentConfig can use lifecycle hooks to run actions during an update of your environment (e.g. a change in the database schema). A Deployment cannot use hooks; however, it supports concurrent updates, so you can have several rollouts in progress and it will scale them properly.
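The difference between the two selector styles is easier to see in code. The Python sketch below re-implements the matching rules in a simplified form; the function names are hypothetical, and the operators (`In`, `NotIn`, `Exists`, `DoesNotExist`) are the ones used in Kubernetes `matchExpressions`.

```python
def matches_equality(labels, selector):
    """Equality-based: every key in the selector must have exactly that value."""
    return all(labels.get(key) == value for key, value in selector.items())


def matches_set_based(labels, expressions):
    """Set-based: every expression must hold for the Pod's labels."""
    for expr in expressions:
        key = expr["key"]
        operator = expr["operator"]
        values = expr.get("values", [])
        if operator == "In":
            if labels.get(key) not in values:
                return False
        elif operator == "NotIn":
            # A Pod without the key also satisfies NotIn.
            if key in labels and labels[key] in values:
                return False
        elif operator == "Exists":
            if key not in labels:
                return False
        elif operator == "DoesNotExist":
            if key in labels:
                return False
        else:
            raise ValueError(f"unsupported operator: {operator}")
    return True


pod_labels = {"app": "web", "tier": "frontend", "env": "staging"}

# ReplicationController style: exact key/value pairs only.
print(matches_equality(pod_labels, {"app": "web", "tier": "frontend"}))  # True

# ReplicaSet style: "env is staging or qa, and no 'canary' label at all".
print(matches_set_based(pod_labels, [
    {"key": "env", "operator": "In", "values": ["staging", "qa"]},
    {"key": "canary", "operator": "DoesNotExist"},
]))  # True
```

A selector such as "env in (staging, qa)" cannot be written as a single equality-based selector, which is the extra expressiveness a ReplicaSet gains.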

CI/CD Pipeline

In modern software projects, many teams utilize the concept of Continuous Integration (CI) and Continuous Delivery (CD). By setting up a toolchain that continuously builds, tests, and stages software releases, a team can ensure that their product can be reliably released at any time. OpenShift can be an enabler in the creation and management of this toolchain.

OpenShift uses OpenShift Pipelines as its solution for building cloud-native CI/CD pipelines on top of Kubernetes. OpenShift Pipelines is the OpenShift fork of the Continuous Delivery Foundation project Tekton Pipelines and can easily be plugged into OpenShift using the OpenShift Pipelines Operator.

You can work with Tekton Tasks, Pipelines, PipelineRuns, etc. using ‘kubectl’ or ‘oc’. On top of that, you can install the Tekton CLI, ‘tkn’, which offers an elegant, customized CLI experience.

On the other hand, Kubernetes does not provide a built-in solution for CI/CD pipelines, so you can plug in any solution as long as it can be packaged in a container. To name a few, the following solutions are worth mentioning:

- Jenkins: Jenkins is the most popular and the most stable CI/CD platform. It has also been used (and still is) by OpenShift developers due to its vast ecosystem and extensibility. If you plan to use it with Kubernetes, consider Jenkins X, a related project suited specifically for the Cloud Native world.

- Spinnaker: Spinnaker is a CD platform for scalable multi-cloud deployments, with backing from Netflix. To install it, we can use the relevant Helm Chart.

- Drone: This is a versatile, cloud-native CD platform with many features. It can be run in Kubernetes using the associated Runner.

- GoCD: Another CI/CD platform from Thoughtworks that offers a variety of workflows and features suited for cloud-native deployments. It can be run in Kubernetes as a Helm Chart.

Additionally, there are cloud services that work closely with Kubernetes and provide CI/CD pipelines, such as CircleCI and Travis, which is helpful if you don’t plan to host a CI/CD platform yourself.

Conclusion

Both Kubernetes and OpenShift are popular container management systems. While Kubernetes helps automate application deployment, scaling, and operations, OpenShift is the container platform that works with Kubernetes to help applications run more efficiently.

Being an open-source project, Kubernetes can be more flexible as a container orchestration platform (for example, you can choose which Linux distribution to use or pick among multiple CI/CD pipeline options). OpenShift, on the other hand, provides additional services to simplify application deployment, log management, registry, build automation, and CI/CD, and it has enterprise-grade support from Red Hat.