An OutOfMemoryError: Direct buffer memory is a common error that Java developers encounter when working with applications that use large amounts of direct memory. Direct memory is allocated outside of the Java heap, and allows storing data that is needed by native libraries or I/O operations. In this tutorial, we will explore some common causes of this error and provide some troubleshooting steps.

Understanding the error message

The first step in troubleshooting an OutOfMemoryError: Direct buffer memory is to understand the error message.

This error message indicates that the Java Virtual Machine (JVM) has run out of direct buffer memory. Direct buffer memory is allocated through the java.nio.ByteBuffer.allocateDirect() method, and is used to store data that is needed by native libraries or I/O operations.

The java.nio.DirectByteBuffer class is a special implementation of java.nio.ByteBuffer that has no byte[] lying underneath. Its main feature is that the JVM will try to work natively on the allocated memory without any additional buffering, so operations performed on it may be faster than those performed on ByteBuffers backed by arrays.

We can allocate such a ByteBuffer by calling:

ByteBuffer directBuffer = ByteBuffer.allocateDirect(64);

When such an object is created via the ByteBuffer.allocateDirect() call, it allocates the specified amount (capacity) of native memory, typically through the malloc() C library call. This memory is released only when the given DirectByteBuffer object is garbage collected and its internal “cleanup” method is called (the most common scenario), or when the buffer's cleaner is invoked explicitly (on JDK 8, through the internal sun.nio.ch.DirectBuffer cleaner().clean() call).
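To make the difference between the two kinds of buffer concrete, here is a minimal sketch that allocates one direct and one heap buffer and reports whether each is direct and whether a byte[] backs it. The class and method names (DirectBufferDemo, describe) are only illustrative and do not come from the JDK.

```java
import java.nio.ByteBuffer;

class DirectBufferDemo {

    /** Summarizes whether a buffer is direct and whether a byte[] backs it. */
    static String describe(ByteBuffer buffer) {
        return "direct=" + buffer.isDirect()
                + ", hasArray=" + buffer.hasArray()
                + ", capacity=" + buffer.capacity();
    }

    public static void main(String[] args) {
        // Native memory, outside of the Java heap.
        ByteBuffer direct = ByteBuffer.allocateDirect(64);
        // Regular heap buffer backed by a byte[].
        ByteBuffer heap = ByteBuffer.allocate(64);

        System.out.println(describe(direct)); // direct=true, hasArray=false, capacity=64
        System.out.println(describe(heap));   // direct=false, hasArray=true, capacity=64
    }
}
```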

Symptoms of the Direct Buffer Memory issue

As we said, Direct Buffers are allocated in native memory, outside of the JVM’s heap and Metaspace (PermGen before JDK 8). If the space available for direct buffers is exhausted, the java.lang.OutOfMemoryError: Direct buffer memory error will be thrown.

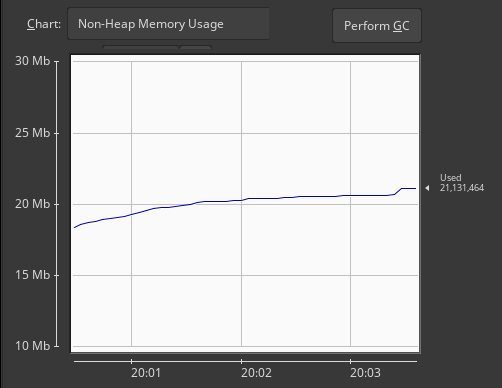

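A good runtime indicator of growing Direct Buffer allocation is the Non-Heap Java Memory usage, which can be collected with any monitoring tool, like jconsole. Note that the direct buffers themselves are reported separately, by the java.nio:type=BufferPool,name=direct MBean.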
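The same figure can be read from inside the application through the standard java.lang.management.BufferPoolMXBean, which exposes a pool named "direct". The following is a minimal sketch; the class name DirectPoolMonitor and the 1 MB allocation are only illustrative.

```java
import java.lang.management.BufferPoolMXBean;
import java.lang.management.ManagementFactory;
import java.nio.ByteBuffer;
import java.util.List;

class DirectPoolMonitor {

    /** Returns the bytes used by the "direct" buffer pool, or -1 if the pool is not exposed. */
    static long directMemoryUsed() {
        List<BufferPoolMXBean> pools =
                ManagementFactory.getPlatformMXBeans(BufferPoolMXBean.class);
        for (BufferPoolMXBean pool : pools) {
            if ("direct".equals(pool.getName())) {
                return pool.getMemoryUsed();
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        long before = directMemoryUsed();
        // Allocate 1 MB of native memory so the pool usage visibly grows.
        ByteBuffer buffer = ByteBuffer.allocateDirect(1024 * 1024);
        long after = directMemoryUsed();

        System.out.println("direct pool before: " + before + " bytes");
        System.out.println("direct pool after:  " + after + " bytes");
        System.out.println("buffer capacity:    " + buffer.capacity() + " bytes");
    }
}
```

Logging this value periodically gives a trend line that is easy to correlate with the moment the error appears.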

From the Operating System's point of view, the amount of memory used by a Java process includes the following elements: Java Heap Size + Metaspace + CodeCache + DirectByteBuffers + Jvm-native-c++-heap.

You can obtain this information using the following command:

pmap -x [PID]

The last line of the output shows the totals for the process. The RSS (in KB) is in the third column, 12343808 in this example:

total kB 14391640 12343808 12272896

Once you know the full size of the JVM process, subtract the Java Heap Size and the Metaspace size to get a rough estimate of the JVM native memory size.
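As a worked example of that subtraction, the sketch below uses the RSS total from the pmap output above together with a heap of 8 GB and a Metaspace of 256 MB. Those two values are purely hypothetical (the article does not state them), so replace them with the figures of your own process.

```java
class NativeMemoryEstimate {

    /** Rough native memory estimate in KB: RSS minus Java heap minus Metaspace. */
    static long estimateNativeKb(long rssKb, long heapKb, long metaspaceKb) {
        return rssKb - heapKb - metaspaceKb;
    }

    public static void main(String[] args) {
        long rssKb = 12_343_808L;          // RSS total taken from the pmap output
        long heapKb = 8L * 1024 * 1024;    // hypothetical: 8 GB heap
        long metaspaceKb = 256L * 1024;    // hypothetical: 256 MB Metaspace

        long nativeKb = estimateNativeKb(rssKb, heapKb, metaspaceKb);
        System.out.println("Estimated native memory: " + nativeKb + " KB"); // 3693056 KB
    }
}
```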

Java Native Memory Tracking

A useful diagnostic is Native Memory Tracking (NMT), which you can enable with the following JVM settings:

-XX:+UnlockDiagnosticVMOptions -XX:NativeMemoryTracking=detail -XX:+PrintNMTStatistics

When Native Memory Tracking is enabled, you can request a report on the JVM memory usage using the following command:

jcmd <pid> VM.native_memory

If you check the jcmd output, you will find, towards the bottom, the amount of native memory reserved and committed in the Internal section (on JDK 11 and later, direct buffers are reported in the Other section instead):

Native Memory Tracking:

Total: reserved=1334532KB, committed=369276KB

- Java Heap (reserved=524288KB, committed=132096KB)

(mmap: reserved=524288KB, committed=132096KB)

- Class (reserved=351761KB, committed=112629KB)

(classes #19111)

( instance classes #17977, array classes #1134)

(malloc=3601KB #66765)

(mmap: reserved=348160KB, committed=109028KB)

( Metadata: )

( reserved=94208KB, committed=92824KB)

( used=85533KB)

( free=7291KB)

( waste=0KB =0.00%)

( Class space:)

( reserved=253952KB, committed=16204KB)

( used=12643KB)

( free=3561KB)

( waste=0KB =0.00%)

- Thread (reserved=103186KB, committed=9426KB)

(thread #100)

(stack: reserved=102712KB, committed=8952KB)

(malloc=352KB #524)

(arena=122KB #198)

- Code (reserved=249312KB, committed=23688KB)

(malloc=1624KB #7558)

(mmap: reserved=247688KB, committed=22064KB)

- GC (reserved=71049KB, committed=56501KB)

(malloc=18689KB #13308)

(mmap: reserved=52360KB, committed=37812KB)

- Compiler (reserved=428KB, committed=428KB)

(malloc=302KB #923)

(arena=126KB #5)

- Internal (reserved=1491KB, committed=1491KB)

(malloc=1451KB #4873)

(mmap: reserved=40KB, committed=40KB)

- Other (reserved=1767KB, committed=1767KB)

(malloc=1767KB #50)

- Symbol (reserved=21908KB, committed=21908KB)

(malloc=19503KB #252855)

(arena=2406KB #1)

- Native Memory Tracking (reserved=5914KB, committed=5914KB)

(malloc=349KB #4947)

(tracking overhead=5565KB)
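If you collect these reports regularly, it can help to extract the committed size of each category so that you can compare them over time. The following is a minimal sketch that parses the category lines of a report like the one above. The class name NmtSummaryParser is hypothetical, and the regular expression only assumes the "- Name (reserved=…KB, committed=…KB)" layout shown in this output.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class NmtSummaryParser {

    // Matches category lines such as "- Internal (reserved=1491KB, committed=1491KB)".
    private static final Pattern CATEGORY = Pattern.compile(
            "^-\\s*(.+?)\\s*\\(reserved=(\\d+)KB, committed=(\\d+)KB\\)\\s*$");

    /** Maps each NMT category name to its committed size in KB, in report order. */
    static Map<String, Long> committedByCategory(String report) {
        Map<String, Long> result = new LinkedHashMap<>();
        for (String line : report.split("\\R")) {
            Matcher m = CATEGORY.matcher(line.trim());
            if (m.matches()) {
                result.put(m.group(1), Long.parseLong(m.group(3)));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // A few lines copied from the report above.
        String report = String.join("\n",
                "Total: reserved=1334532KB, committed=369276KB",
                "- Java Heap (reserved=524288KB, committed=132096KB)",
                "(mmap: reserved=524288KB, committed=132096KB)",
                "- Internal (reserved=1491KB, committed=1491KB)",
                "- Other (reserved=1767KB, committed=1767KB)");

        committedByCategory(report).forEach(
                (name, kb) -> System.out.println(name + ": " + kb + " KB committed"));
    }
}
```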

Setting MaxDirectMemorySize

The JVM parameter -XX:MaxDirectMemorySize lets you set the maximum amount of memory that can be reserved for Direct Buffer usage. In the JDK 8 sources, the field that holds this limit is initialized to 64MB:

private static long directMemory = 64 * 1024 * 1024;

However, by digging into sun.misc.VM you will see that, if not configured, it derives its value from Runtime.getRuntime().maxMemory(), and thus from the value of -Xmx. So if you don’t configure -XX:MaxDirectMemorySize and do configure -Xmx2g, the “default” MaxDirectMemorySize will also be about 2 GB, and the total JVM memory usage of the app (heap+direct) may grow up to 2 + 2 = 4 GB.
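To check which limit actually applies to a running JVM, one option is to read the flag through the HotSpot-specific com.sun.management.HotSpotDiagnosticMXBean and fall back to the maximum heap size when the flag is unset (it is then reported as 0). This is a sketch under the assumption that you run a HotSpot-based JVM; the class name DirectMemoryLimit is only illustrative.

```java
import com.sun.management.HotSpotDiagnosticMXBean;
import java.lang.management.ManagementFactory;

class DirectMemoryLimit {

    /**
     * Returns the effective direct memory limit in bytes: the configured
     * -XX:MaxDirectMemorySize, or the max heap size when the flag is unset.
     */
    static long effectiveLimitBytes() {
        // Assumption: HotSpot JVM, where this diagnostic MXBean is available.
        HotSpotDiagnosticMXBean hotspot =
                ManagementFactory.getPlatformMXBean(HotSpotDiagnosticMXBean.class);
        long configured =
                Long.parseLong(hotspot.getVMOption("MaxDirectMemorySize").getValue());
        return configured > 0 ? configured : Runtime.getRuntime().maxMemory();
    }

    public static void main(String[] args) {
        long heapMax = Runtime.getRuntime().maxMemory();
        long directMax = effectiveLimitBytes();

        System.out.println("Max heap:           " + heapMax + " bytes");
        System.out.println("Max direct memory:  " + directMax + " bytes");
        System.out.println("Worst case (sum):   " + (heapMax + directMax) + " bytes");
    }
}
```

Running it with and without -XX:MaxDirectMemorySize shows how the worst-case footprint changes.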

Collecting the Heap Dump

Even though the memory of a DirectByteBuffer is allocated outside of the JVM Heap, the Heap still provides important hints. In fact, each DirectByteBuffer is a small Java object on the Heap that holds a reference to its native memory.

From the Heap Dump, you can therefore check how much native memory these DirectByteBuffers are using.

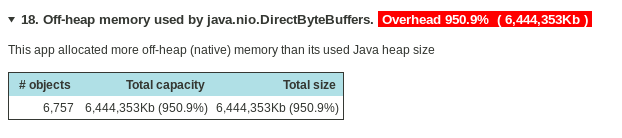

If you are using an advanced tool like JXRay (https://jxray.com/), it’s enough to load your Heap dump: the report automatically pinpoints your Off-Heap memory usage, with the amounts already calculated:

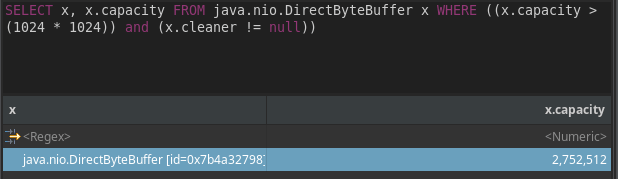

With another tool like Eclipse MAT, you have to calculate it yourself by using the following OQL expression:

SELECT x, x.capacity FROM java.nio.DirectByteBuffer x WHERE ((x.capacity > 1024 * 1024) and (x.cleaner != null))

The above query lists all DirectByteBuffers which have been allocated and not released, and whose capacity is bigger than 1MB.

Checking the Reference chain

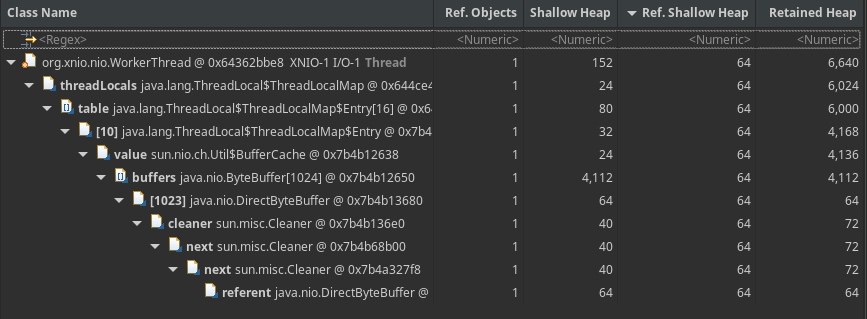

After checking how much native memory your DirectByteBuffers are using, the next step is to follow the reference chain and understand who is holding the ByteBuffers.

Still using Eclipse MAT, you can right-click on the result of your OQL (x.capacity field) and choose “Merge Shortest Paths to GC Roots”. That will show you which class is holding the memory for the DirectBuffer, thus preventing it from being garbage-collected.

So, in this case you have your XNIO worker threads holding a reference to your DirectBuffers. This might be either a temporary problem or a bug.

If it’s a temporary problem (such as a spike in native memory which gradually reduces), that might be something you can tune, for example by reducing the number of io threads used by your application.

In WildFly / JBoss EAP the number of io-threads to create for your workers is configured in the io subsystem:

/subsystem=io/worker=default/:read-resource(recursive=false)

{

"outcome" => "success",

"result" => {

"io-threads" => undefined,

"stack-size" => 0L,

"task-keepalive" => 60,

"task-max-threads" => undefined

}

}

If io-threads is not specified, a default is chosen, which is calculated as cpuCount * 2.
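To see what that default works out to on a given machine, a small sketch can apply the cpuCount * 2 formula quoted above to the number of processors the JVM reports. The class and method names are illustrative, and the result is only an estimate of what the application server computes.

```java
class IoThreadDefault {

    /** Default io-threads according to the formula quoted above: cpuCount * 2. */
    static int defaultIoThreads(int cpuCount) {
        return cpuCount * 2;
    }

    public static void main(String[] args) {
        int cpus = Runtime.getRuntime().availableProcessors();
        System.out.println(cpus + " CPUs -> " + defaultIoThreads(cpus) + " io-threads by default");
    }
}
```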

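Another option is to limit the size of the temporary DirectByteBuffers that each thread caches, using the jdk.nio.maxCachedBufferSize JVM property:

-Djdk.nio.maxCachedBufferSize=262144

The value is expressed in bytes (262144, that is 256 KB, is only an example). Temporary buffers larger than this limit are not kept in the per-thread cache and are released right after use.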

Finally, if you are using the WildFly application server or JBoss EAP, a more drastic solution is to disable direct buffers, at the expense of increased Heap usage:

/subsystem=io/buffer-pool=default:write-attribute(name=direct-buffers,value=false)

Out of Memory caused by allocation failures

When using G1GC (the default Garbage collector since Java 9) there are additional options to manage an allocation failure. First, a definition: a GC allocation failure means that the garbage collector could not move objects from the young generation to the old generation fast enough, because the old generation does not have enough free memory. Potential solutions include:

- Increase the number of concurrent marking threads by setting the ‘-XX:ConcGCThreads’ value. More Concurrent Marking Threads make garbage collection run faster, at the price of a higher CPU cost.

- Force the G1 Garbage Collector to start the Marking phase earlier by lowering the ‘-XX:InitiatingHeapOccupancyPercent’ value. Its default value is 45, which means the G1 GC marking phase begins only when heap usage reaches 45%. By reducing this value, the marking phase starts earlier, so that a Full GC can be avoided.

- Set -XX:+UseG1GC -XX:SoftRefLRUPolicyMSPerMB=1. This makes the JVM flush softly referenced objects almost immediately. As it turns out, Direct Buffers are stored outside the Heap, and a reference to them is generally held as a PhantomReference in the tenured generation. If there’s no pressure to run a garbage collection on the tenured generation, you might hit an Out of Memory because of the accumulation of such references in the tenured generation.

Tuning glibc

On Linux, glibc provides the default native memory allocator for Java applications. For performance reasons, memory allocated through glibc may not be returned to the Operating System once it is freed. This performance improvement, however, comes at the price of increased memory fragmentation. The fragmentation can grow unboundedly, eventually causing an Out of Memory.

MALLOC_ARENA_MAX is an environment variable that controls how many memory pools (arenas) glibc can create. By default, on 64-bit systems, it is 8 * CPU cores. You can experiment with reducing this value to 2 or 1 and see if the Out of Memory issue goes away. The lower this value, the fewer memory pools will be created (at the expense of reduced performance).

export MALLOC_ARENA_MAX=1

Explicit Garbage Collection disabled?

In some cases, memory allocated by direct buffers may accumulate for a long time before it is collected. In the long run that’s not really a leak, but it will increase peak memory usage. In this case, the explicit Garbage collection (done with System.gc()) is there to free buffers when the reserveMemory limit is hit.

OpenJDK invokes System.gc() during direct ByteBuffer allocation, when that limit is reached, as a hint in the hope of timely reclamation of direct memory by the GC.

So, it is worth checking if you are using DisableExplicitGC in your JVM settings, because this option turns System.gc() calls into no-ops:

-XX:+DisableExplicitGC
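If you cannot easily inspect the startup scripts, a running JVM can report its own arguments through the standard RuntimeMXBean. The sketch below scans them for the flag. The class name ExplicitGcCheck is illustrative, and the check only covers arguments that the JVM reports as input arguments, so treat a negative result as a hint rather than a proof.

```java
import java.lang.management.ManagementFactory;
import java.util.List;

class ExplicitGcCheck {

    /** Returns true if the given JVM arguments switch explicit GC off. */
    static boolean explicitGcDisabled(List<String> jvmArgs) {
        boolean disabled = false;
        for (String arg : jvmArgs) {
            // Assumption: when the flag is repeated, the last occurrence wins.
            if (arg.equals("-XX:+DisableExplicitGC")) {
                disabled = true;
            } else if (arg.equals("-XX:-DisableExplicitGC")) {
                disabled = false;
            }
        }
        return disabled;
    }

    public static void main(String[] args) {
        List<String> jvmArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println("DisableExplicitGC set: " + explicitGcDisabled(jvmArgs));
    }
}
```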

(Reference: https://stackoverflow.com/questions/32912702/impact-of-setting-xxdisableexplicitgc-when-nio-direct-buffers-are-used)

Check Open issues

Finally, the offending code may not be in your application but in one of the dependencies you are using. So it is worth checking for known issues in frameworks that use DirectByteBuffer, such as Netty:

https://issues.redhat.com/browse/NETTY-424

Also, check if your specific version of the application server (WildFly / EAP ) needs to be upgraded to fix an older issue for the DirectByteBuffer.
